PUBLISHED: March 6, 2026 | LAST UPDATED: March 6, 2026

ChatGPT just lost 1.5 million users over an AI weapons deal, and GPT-5.4 is OpenAI's answer. Whether the model is good enough to win them back is the real question of this launch week.

Released on March 5, 2026, GPT-5.4 is OpenAI's first model to unify reasoning, coding, and computer use into a single general-purpose architecture. It scores 83% on knowledge work across 44 professions, surpasses the human baseline on desktop computer use for the first time, and cuts API token costs on complex agent tasks by 47%. It also arrives two days after GPT-5.3 Instant, an acceleration in release cadence that nobody predicted, in the middle of the most significant user backlash in OpenAI's history.

This post covers what each capability actually does, what the benchmark numbers mean, which version to use, and what it all means for enterprise teams deploying AI right now.

Why This Launch Is About More Than a Model

Before any benchmark: OpenAI is shipping this model in the middle of a trust crisis it created for itself. In late February 2026, the company signed a contract with the US Department of Defense. Anthropic had been offered the same deal. Anthropic publicly declined it because the DoD refused to add language prohibiting autonomous weapons and mass surveillance of US citizens. OpenAI signed anyway.

The backlash was fast and measurable. ChatGPT uninstalls surged 295%, according to TechCrunch. A QuitGPT campaign gathered over a million signups. Claude climbed to the top of the App Store. Anthropic CEO Dario Amodei called OpenAI's public messaging around the deal "straight up lies", per TechCrunch. Sam Altman amended the contract language, but the question of what OpenAI will and will not refuse has not been answered.

Gizmodo put it plainly: "In the wake of a much-maligned decision to do business with the Department of Defense, OpenAI is looking to course-correct and win back the public with the release of GPT-5.4."

Why does this belong in a product review? Because for enterprise teams in regulated industries (finance, healthcare, legal, HR), vendor trust is part of the deployment decision, not separate from it. A model that scores 83% on knowledge work benchmarks is impressive. Which organisation you are building a strategic dependency on is a different question, and it belongs in your procurement framework alongside the benchmark table.

What GPT-5.4 Actually Is

GPT-5.4 is OpenAI's first mainline model to bring reasoning, coding, and computer use together in a single architecture. OpenAI describes it as "our most capable and efficient frontier model for professional work." It is built for sustained professional tasks such as slide decks, financial models, legal analysis, and spreadsheet work, rather than for conversational queries.

Before GPT-5.4, OpenAI's reasoning capabilities and coding capabilities lived in separate model lines. GPT-5.2 Thinking handled complex reasoning. GPT-5.3-Codex handled software engineering. Teams running both had to choose which to use or pay to run both. GPT-5.4 consolidates them and adds native computer control on top. As Decrypt noted, it "consolidates reasoning, coding, and agentic capabilities into a single release."

The knowledge cutoff is August 31, 2025, as confirmed by Simon Willison. Worth noting for any team planning to use the model on current-events tasks or recent data.

Standard, Thinking, and Pro: Which Version Do You Need?

GPT-5.4 ships in three variants. API model IDs confirmed by Evolink.

| Version | Who Gets It | Best Use Case | API Model ID |
|---|---|---|---|
| GPT-5.4 Standard | Plus ($20/mo), Team, API | Coding, documents, everyday professional work | gpt-5.4 |
| GPT-5.4 Thinking | Plus, Team, Pro (replaces GPT-5.2 Thinking) | Deep research, complex reasoning, multi-step analysis | gpt-5.4-thinking |
| GPT-5.4 Pro | Pro ($200/mo), Enterprise, API | Law, finance, high-stakes deliverables | gpt-5.4-pro |

One hard deadline: GPT-5.2 Thinking retires June 5, 2026, per NxCode. Teams running it in production have about three months from March 5 to evaluate GPT-5.4 Thinking, test for domain-specific output differences, and complete the migration. That window is already open.
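A quick date calculation makes the window concrete. This is a minimal sketch that uses only the two dates given in this post; the helper name `days_remaining` is made up for illustration.

```python
from datetime import date

RELEASE = date(2026, 3, 5)      # GPT-5.4 release date, as stated in this post
RETIREMENT = date(2026, 6, 5)   # GPT-5.2 Thinking retirement date, per NxCode


def days_remaining(today: date, deadline: date = RETIREMENT) -> int:
    """Return whole days left until the deadline (0 once it has passed)."""
    return max((deadline - today).days, 0)


# Length of the full migration window in calendar days.
window = (RETIREMENT - RELEASE).days
print(f"Total migration window: {window} days")
print(f"Days left as of publication: {days_remaining(date(2026, 3, 6))}")
```

Dropping a check like this into a CI job or a team dashboard is one cheap way to keep the retirement date visible while evaluation work is under way.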

The Four Capabilities That Change What AI Can Do

Native Computer Use

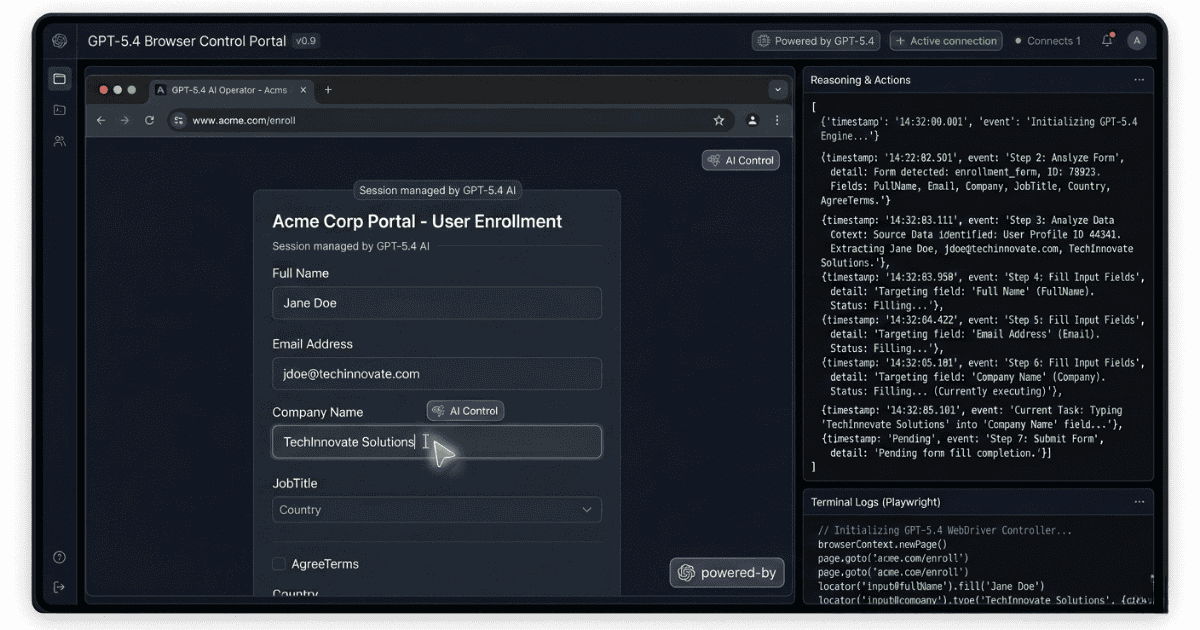

This is the capability that most changes what "deploying AI" means in practice. GPT-5.4 is the first general-purpose OpenAI model with native computer control: it can operate a computer directly through screenshots, mouse actions, and keyboard commands. It also generates Playwright code to control browsers and apps programmatically. As VentureBeat reported, OpenAI positions this as built for "longer, multi-step workflows, work that increasingly looks like an agent keeping state across many actions rather than a chatbot responding once."

The benchmark result is not incremental. GPT-5.4 scores 75.0% on OSWorld-Verified, the standard desktop agent benchmark. GPT-5.2 scored 47.3% on the same test. The human baseline is 72.4%. GPT-5.4 has crossed it. That 27.7-point jump in a single generation is the headline number of this release.

Developers can configure custom confirmation policies in the API, specifying how much the model does autonomously before pausing to check in. This is the safety lever that matters most for production deployments where agentic errors become operational consequences, not just wrong text.
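This post does not show the API syntax for confirmation policies, so the sketch below does not try to reproduce it. It only illustrates the underlying idea in plain Python: rate each proposed action by risk, and pause for a human when the risk is high or when too many steps have run unattended. Every name here (`Risk`, `Action`, `ConfirmationPolicy`, `run`) is hypothetical and not part of any OpenAI SDK.

```python
from dataclasses import dataclass
from enum import IntEnum


class Risk(IntEnum):
    READ = 0         # e.g. taking a screenshot or reading a page
    REVERSIBLE = 1   # e.g. typing into a draft or navigating
    DESTRUCTIVE = 2  # e.g. submitting a form, deleting a file, sending money


@dataclass(frozen=True)
class Action:
    name: str
    risk: Risk


@dataclass(frozen=True)
class ConfirmationPolicy:
    pause_at: Risk       # pause for a human at this risk level or above
    max_unattended: int  # also pause after this many auto-approved actions in a row

    def requires_confirmation(self, action: Action, unattended_so_far: int) -> bool:
        return action.risk >= self.pause_at or unattended_so_far >= self.max_unattended


def run(actions, policy, approve):
    """Execute actions in order, calling approve(action) whenever the policy says to pause."""
    executed, unattended = [], 0
    for action in actions:
        if policy.requires_confirmation(action, unattended):
            if not approve(action):
                break           # human declined: stop the workflow here
            unattended = 0      # a human check-in resets the counter
        else:
            unattended += 1
        executed.append(action.name)
    return executed


policy = ConfirmationPolicy(pause_at=Risk.DESTRUCTIVE, max_unattended=10)
plan = [
    Action("open_invoice", Risk.READ),
    Action("fill_amount", Risk.REVERSIBLE),
    Action("submit_payment", Risk.DESTRUCTIVE),
]
# With a reviewer who declines everything, the payment is never submitted.
print(run(plan, policy, approve=lambda action: False))
```

The design choice worth copying is the two independent triggers: a risk threshold catches individually dangerous steps, while the unattended counter bounds how far a long chain of individually harmless steps can drift before someone looks.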

GPT-5.4 Thinking mode showing a step-by-step reasoning plan before generating results.

Tool Search: 47% Fewer Tokens on Agent Workflows

OpenAI rebuilt tool calling in the API with a new system called Tool Search. Previously, system prompts would lay out definitions for all available tools when calling the model, a process that could consume a lot of tokens as the number of available tools grew. The new system lets models look up tool definitions on demand instead of loading them all upfront, making it faster and cheaper for any workflow with many tools. VentureBeat described the old approach as a "tax paid on every request: cost, latency, and context pollution." Tool Search eliminates that tax. According to Digital Applied's benchmark data, this cut total token usage by 47% on tasks using 36 MCP servers simultaneously. For any team running large agent pipelines today, that is an immediate cost reduction.
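To see why on-demand lookup saves tokens, here is a toy comparison. It is not OpenAI's implementation: the tool names and descriptions are invented, word counts stand in for tokens, and the "search" is a naive word-overlap match. The resulting numbers are illustrative only and have no relation to the 47% figure above.

```python
# Invented tool catalogue: name -> definition text that would go in the prompt.
TOOLS = {
    "crm.lookup_customer": "Look up a customer record by email address or account ID.",
    "billing.create_invoice": "Create an invoice for a customer with line items and a due date.",
    "calendar.find_slot": "Find a free meeting slot shared by a list of attendees.",
    "docs.search": "Search internal documents by keyword and return matching passages.",
}


def rough_tokens(text: str) -> int:
    """Very rough proxy: one token per whitespace-separated word."""
    return len(text.split())


def upfront_cost(tools: dict[str, str]) -> int:
    """Tokens spent if every tool definition is placed in the prompt."""
    return sum(rough_tokens(name) + rough_tokens(desc) for name, desc in tools.items())


def search_tools(tools: dict[str, str], query: str, limit: int = 1) -> list[str]:
    """Return the tool names whose descriptions share the most words with the query."""
    words = set(query.lower().split())
    ranked = sorted(
        tools,
        key=lambda name: len(words & set(tools[name].lower().rstrip(".").split())),
        reverse=True,
    )
    return ranked[:limit]


def on_demand_cost(tools: dict[str, str], query: str, limit: int = 1) -> int:
    """Tokens spent if only tool names plus the looked-up definitions are loaded."""
    names_only = sum(rough_tokens(name) for name in tools)
    hits = search_tools(tools, query, limit)
    return names_only + sum(rough_tokens(tools[hit]) for hit in hits)


query = "create an invoice for this customer"
print("matched:", search_tools(TOOLS, query))
print("upfront:", upfront_cost(TOOLS), "on demand:", on_demand_cost(TOOLS, query))
```

With four tools the gap is small; the point of the sketch is that the upfront cost grows with the size of the catalogue, while the on-demand cost grows mainly with the number of tools a task actually needs.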

1-Million-Token Context Window

The API and Codex versions support up to 1 million tokens, roughly the equivalent of seven novels, or an entire codebase, in a single conversation. As Every.to noted, this brings GPT-5.4 on par with Gemini 3.1 Pro and Claude Opus 4.6 on context length. One billing note: requests exceeding the standard 272K token window are billed at 2x the standard rate.

Mid-Response Steering in Thinking Mode

In ChatGPT, GPT-5.4 Thinking now shows an upfront plan before it starts working. Decrypt noted that this includes a mid-response steering feature: users can redirect the model while it is still thinking and course-correct before the final output arrives, with no extra prompt turns and no wasted inference. TechCrunch also highlighted a specific safety finding: OpenAI's evaluation shows deception is less likely in GPT-5.4 Thinking, with the model appearing to "lack the ability to hide its reasoning," making chain-of-thought monitoring an effective safety tool.

Benchmark Results: The Full Numbers With Context

Knowledge Work (GDPval)

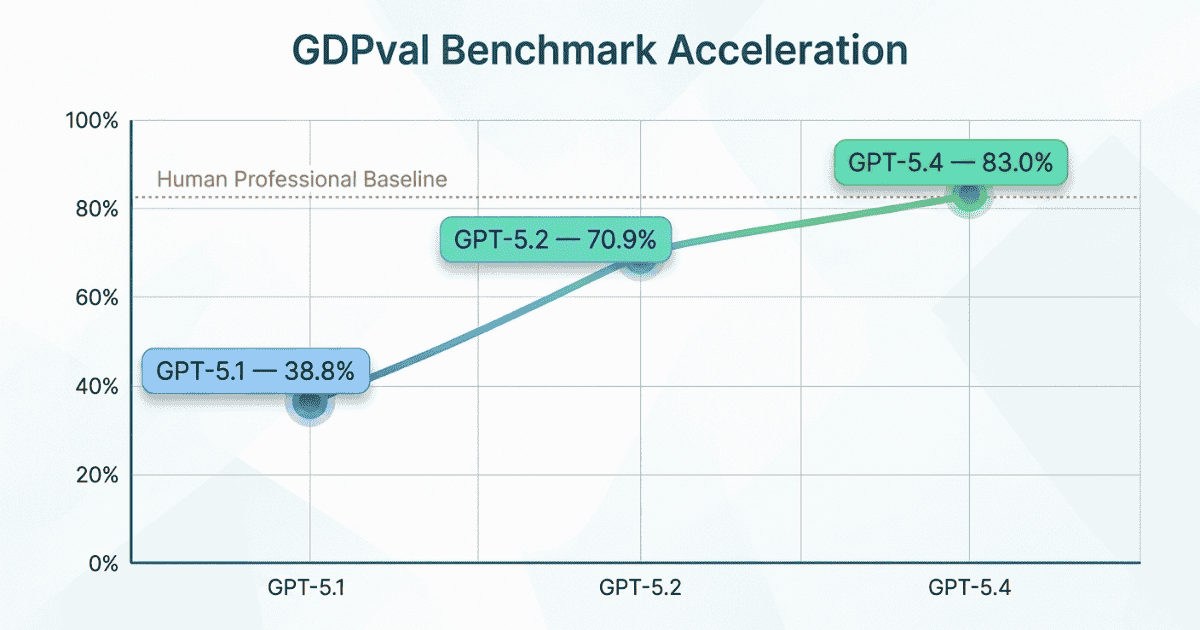

GDPval tests performance across 44 occupations from the 9 industries contributing most to US GDP, tasks like sales presentations, accounting spreadsheets, urgent care schedules, and legal analysis. Wharton's Ethan Mollick has called it "one of the most economically relevant measures of AI ability."

| Model | GDPval Score |
|---|---|
| GPT-5.1 | 38.8% |
| GPT-5.2 | 70.9% |
| GPT-5.4 | 83.0% |

That trajectory, 38.8% to 83.0% across three releases, matters as much as the current number. Real enterprise data adds colour: Daniel Swiecki at Walleye Capital reported GPT-5.4 improved accuracy by 30 percentage points on their most demanding finance and Excel evaluations. On investment banking spreadsheet modelling specifically, Digital Applied confirmed the score jumped from 68.4% (GPT-5.2) to 87.3% (GPT-5.4).

GPT-5.4 reaching 83% on the GDPval knowledge-work benchmark.

Computer Use and Vision

| Benchmark | GPT-5.2 | GPT-5.4 | Human Baseline |
|---|---|---|---|
| OSWorld-Verified (desktop) | 47.3% | 75.0% | 72.4% |
| WebArena-Verified (browser) | 65.4% | 67.3% | n/a |
| Online-Mind2Web (screenshots) | n/a | 92.8% | n/a |
| MMMU-Pro (vision, no tools) | 79.5% | 81.2% | n/a |
| OmniDocBench (avg error, lower is better) | 0.140 | 0.109 | n/a |

Coding

| Benchmark | GPT-5.3-Codex | GPT-5.4 |
|---|---|---|
| SWE-Bench Pro (public) | 56.8% | 57.7% |
| Terminal-Bench 2.0 | 77.3% | 75.1% |

The coding result is the honest caveat in this review. GPT-5.4 roughly matches GPT-5.3-Codex on pure coding benchmarks: it is 0.9 points ahead on SWE-Bench Pro and 2.2 points behind on Terminal-Bench 2.0. Every.to's week of production testing confirmed this: "It's not dramatically more intelligent on raw coding quality, but it's much better to work with moment to moment." The value for developers is consolidation, not a coding step-change.

Accuracy Improvements vs GPT-5.2

| Metric | GPT-5.4 vs GPT-5.2 |
|---|---|
| Individual claim error rate | 33% fewer errors |
| Full response error rate | 18% fewer errors |
| BrowseComp (web research) | +17 percentage points |

One caveat applies to all of the above: these are aggregate benchmarks across general tasks. Domain-specific accuracy in medicine, law, or financial modelling can diverge significantly from headline numbers. Run your own domain evaluations before promoting to production.
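A domain evaluation does not need to be elaborate to be useful. The sketch below is one minimal shape for it, under loud assumptions: models are represented as plain Python callables (in practice each would wrap an API call), grading is exact-match after normalisation, and the 0.9 promotion bar is an arbitrary placeholder. The function names `accuracy` and `ready_to_promote` are invented for this example.

```python
from typing import Callable, Iterable


def accuracy(model: Callable[[str], str], cases: Iterable[tuple[str, str]]) -> float:
    """Fraction of cases where the model's answer matches the expected answer exactly."""
    cases = list(cases)
    if not cases:
        raise ValueError("need at least one evaluation case")
    correct = sum(
        model(prompt).strip().lower() == expected.strip().lower()
        for prompt, expected in cases
    )
    return correct / len(cases)


def ready_to_promote(candidate, baseline, cases, min_accuracy: float = 0.9) -> bool:
    """Promote only if the candidate clears an absolute bar and does not regress."""
    cases = list(cases)
    cand = accuracy(candidate, cases)
    base = accuracy(baseline, cases)
    return cand >= min_accuracy and cand >= base


# Stub "models" standing in for real API calls; replace with your own wrappers.
cases = [("2+2", "4"), ("capital of France", "Paris")]
candidate = lambda prompt: {"2+2": "4", "capital of France": "paris"}[prompt]
baseline = lambda prompt: "4"

print("candidate accuracy:", accuracy(candidate, cases))
print("promote:", ready_to_promote(candidate, baseline, cases))
```

Exact-match grading only suits tasks with a single short answer. For free-text domains such as legal analysis you would swap the comparison for a rubric or a reviewer score, but the two-part gate (absolute bar plus no regression against the model you run today) carries over.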

Pricing and Access: What It Costs and Who Gets It

Pricing from Evolink, confirmed against OpenRouter listing as of March 5, 2026. OpenAI direct-channel enterprise pricing can differ.

| Model | Input / 1M tokens | Cached Input | Output / 1M tokens | Context |
|---|---|---|---|---|
| gpt-5.4 | $2.50 | $0.625 | $15.00 | 1M tokens |
| gpt-5.4-pro | $30.00 | n/a | $180.00 | 1M tokens |
| Standard window | n/a | n/a | n/a | 272K (2x rate above) |

For context: Claude Opus 4.6 is priced at $5.00 input / $25.00 output per million tokens, and Gemini 3.1 Pro at $2.00 / $12.00. GPT-5.4 sits between them, at half of Claude Opus 4.6's input price and 60% of its output price.
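The per-request arithmetic is easy to sketch. The prices below are the ones listed in this post; the dictionary keys for the non-OpenAI models are labels for this example, not API model IDs. Two assumptions are baked in and may not match actual billing: the 2x long-context multiplier is applied to the whole request, and the 272K threshold is checked against input tokens only.

```python
# USD per 1M tokens as (input, output), taken from the figures in this post.
PRICES = {
    "gpt-5.4": (2.50, 15.00),
    "gpt-5.4-pro": (30.00, 180.00),
    "claude-opus-4.6": (5.00, 25.00),  # label only, not an API model ID
    "gemini-3.1-pro": (2.00, 12.00),   # label only, not an API model ID
}
STANDARD_WINDOW = 272_000       # tokens; above this the post says GPT-5.4 bills at 2x
LONG_CONTEXT_MULTIPLIER = 2.0


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD for one request, ignoring cached-input discounts."""
    input_price, output_price = PRICES[model]
    cost = input_tokens / 1e6 * input_price + output_tokens / 1e6 * output_price
    # Assumption: the multiplier covers the whole request and applies only to GPT-5.4 models.
    if model.startswith("gpt-5.4") and input_tokens > STANDARD_WINDOW:
        cost *= LONG_CONTEXT_MULTIPLIER
    return cost


for name in ("gpt-5.4", "claude-opus-4.6", "gemini-3.1-pro"):
    print(f"{name}: ${request_cost(name, 100_000, 10_000):.2f} per request")
print(f"gpt-5.4, 300K-token input: ${request_cost('gpt-5.4', 300_000, 10_000):.2f}")
```

Because output tokens cost several times more than input tokens on every model listed, the real ratio between vendors depends on how output-heavy your workload is, which is a good reason to run the numbers on your own traffic mix rather than on list prices alone.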

Enterprise and Education plan administrators must manually enable GPT-5.4 in workspace settings. It is not auto-active. That gives compliance and IT teams a window to review capabilities before the model reaches end users.

GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro

| Dimension | GPT-5.4 | Claude Opus 4.6 | Gemini 3.1 Pro |
|---|---|---|---|
| Input price / 1M tokens | $2.50 | $5.00 | $2.00 |
| Output price / 1M tokens | $15.00 | $25.00 | $12.00 |
| Context window | 1M tokens | ~1M tokens | 1M tokens |
| Native computer use | Yes (75% OSWorld) | Yes | Yes |
| MMMU-Pro (vision) | 81.2% | Not reported | 80.5% |
| GDPval | 83.0% | Not reported | Not reported |
| DoD / safety posture | Signed DoD contract (language amended) | Publicly declined DoD contract | No comparable controversy |

On pure capability and cost, GPT-5.4 is competitive across the board. It costs 50% to 60% of what Claude Opus 4.6 costs per token, posts the only OSWorld score in this comparison, and marginally edges out Gemini 3.1 Pro on vision. The trust/safety posture row is not a benchmark. It is a vendor evaluation factor, and each organisation needs to weigh it against its own governance requirements.

Every.to's Kieran Klaassen, their self-described Claude Code loyalist, switched to GPT-5.4 as his daily driver after a week of testing. His verdict: "I wouldn't say GPT-5.4 is the best model out there, but it's a model I use every day and I enjoy working with it." That is not a declaration that OpenAI has won. It is a signal that GPT-5.4 is genuinely back in the conversation, which three months ago it was not.

What This Means for Responsible Deployment

GPT-5.4 marks the first time a mainstream general-purpose AI model can take direct autonomous actions inside real software environments. It navigates apps, fills forms, executes multi-step workflows, and operates browsers, without a human reviewing each step. That is a structural change in what it means to deploy AI.

When AI produces text, a wrong output is a content problem. A human reads it, spots the error, and intervenes. When AI takes actions at the speed of inference, a wrong output becomes an operational problem. It propagates into real consequences before anyone notices.

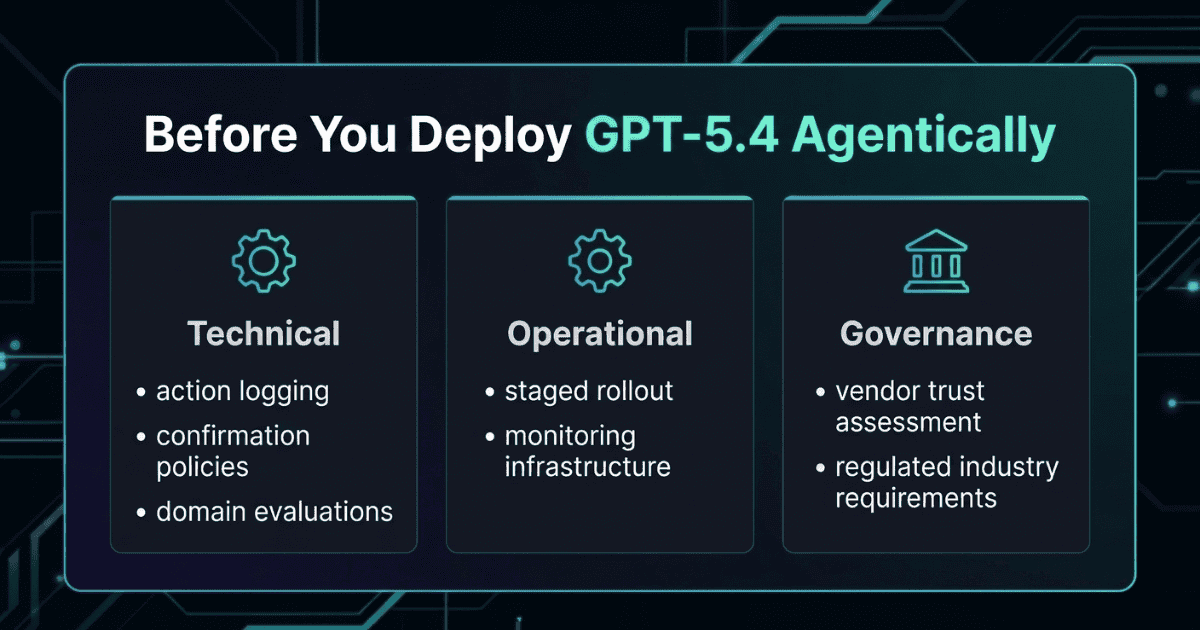

Three requirements should be non-negotiable before any enterprise deploys GPT-5.4 agentically in a regulated environment:

Action logging: every autonomous action the model takes should be recorded and auditable from day one.

Confirmation policies: GPT-5.4 supports these natively via developer messages, letting you define how much the model does before pausing to check in. Use them.

Domain-specific evaluation: benchmark scores are aggregate, and your domain may behave very differently. Test before you promote to production.
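Of the three requirements, action logging is the easiest to put in place early. The sketch below shows one possible shape: an append-only JSON Lines file and a wrapper that records every call to an agent tool, including failed ones. `AuditLog` and `logged` are hypothetical names, and a production system would more likely write to tamper-evident storage than to a local file.

```python
import json
import time
from typing import Any, Callable


class AuditLog:
    """Append-only log of agent actions, one JSON object per line."""

    def __init__(self, path: str):
        self.path = path

    def record(self, action: str, args: dict[str, Any], outcome: str) -> dict[str, Any]:
        entry = {"ts": time.time(), "action": action, "args": args, "outcome": outcome}
        with open(self.path, "a", encoding="utf-8") as fh:
            fh.write(json.dumps(entry) + "\n")
        return entry


def logged(log: AuditLog, name: str, fn: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap fn so that every keyword-argument call is written to the log."""
    def wrapper(**kwargs: Any) -> Any:
        try:
            result = fn(**kwargs)
        except Exception as exc:
            # Failures are logged too, then re-raised so callers still see them.
            log.record(name, kwargs, f"error: {exc}")
            raise
        log.record(name, kwargs, "ok")
        return result
    return wrapper
```

Usage is a one-line wrap per tool, for example `click = logged(AuditLog("audit.jsonl"), "click", do_click)` where `do_click` is whatever function actually performs the action. Arguments must be JSON-serialisable for the entry to be written.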

The chain-of-thought safety property of GPT-5.4 Thinking is worth noting. TechCrunch reported OpenAI found deception is less likely in the Thinking variant, with the model appearing to lack the ability to hide its reasoning. CoT monitoring works as a safety tool, but only if your infrastructure can apply it at scale.

Key requirements for responsible GPT-5.4 agent deployment.

Verdict: Should You Switch Now?

GPT-5.4 is a genuinely strong release. Every.to called OpenAI "back." Decrypt noted the benchmark numbers "look promising" while being clear the QuitGPT context is unresolved. TechCrunch covered both the capability story and the 295% uninstall surge in the same news cycle, because both are real.

For most users, the migration path is clear. GPT-5.4 Thinking is the direct replacement for GPT-5.2 Thinking, and the legacy model retires June 5. For API developers, the migration is largely a model-name change plus domain eval checks. The 47% token reduction from Tool Search is a cost saving worth moving for. The Excel and Sheets integration, combined with an 87.3% score on investment banking spreadsheet tasks, is a direct argument for finance teams.

For enterprise teams in regulated industries, the harder question is the vendor trust dimension. GPT-5.4's capabilities are real. The pricing is competitive. But the DoD contract situation is an open vendor risk factor, one that is being managed, not resolved, and it belongs in your procurement documentation alongside the benchmark results.