PUBLISHED: April 8, 2026 | LAST UPDATED: April 8, 2026

What Is Claude Mythos?

Claude Mythos is a general-purpose AI model released by Anthropic in preview form in April 2026, engineered to identify and remediate software vulnerabilities at a scale and speed that surpasses previous AI systems. Unlike earlier models in the Claude series, Mythos combines three capabilities into a single autonomous loop: detecting zero-day vulnerabilities, generating sophisticated exploits, and proposing or implementing patches, completing the entire vulnerability lifecycle without human intervention. The model represents an evolutionary leap in AI-driven cybersecurity. Where previous tools required human analysts to guide detection workflows, Mythos operates independently, applying advanced coding and reasoning skills to analyse complex software systems and unearth flaws that have remained hidden for years. Anthropic has positioned Mythos not as a replacement for human cybersecurity teams, but as a force multiplier, one with capabilities that fundamentally alter the cost-benefit calculation of launching cyberattacks.

Why Mythos Crossed the Threshold

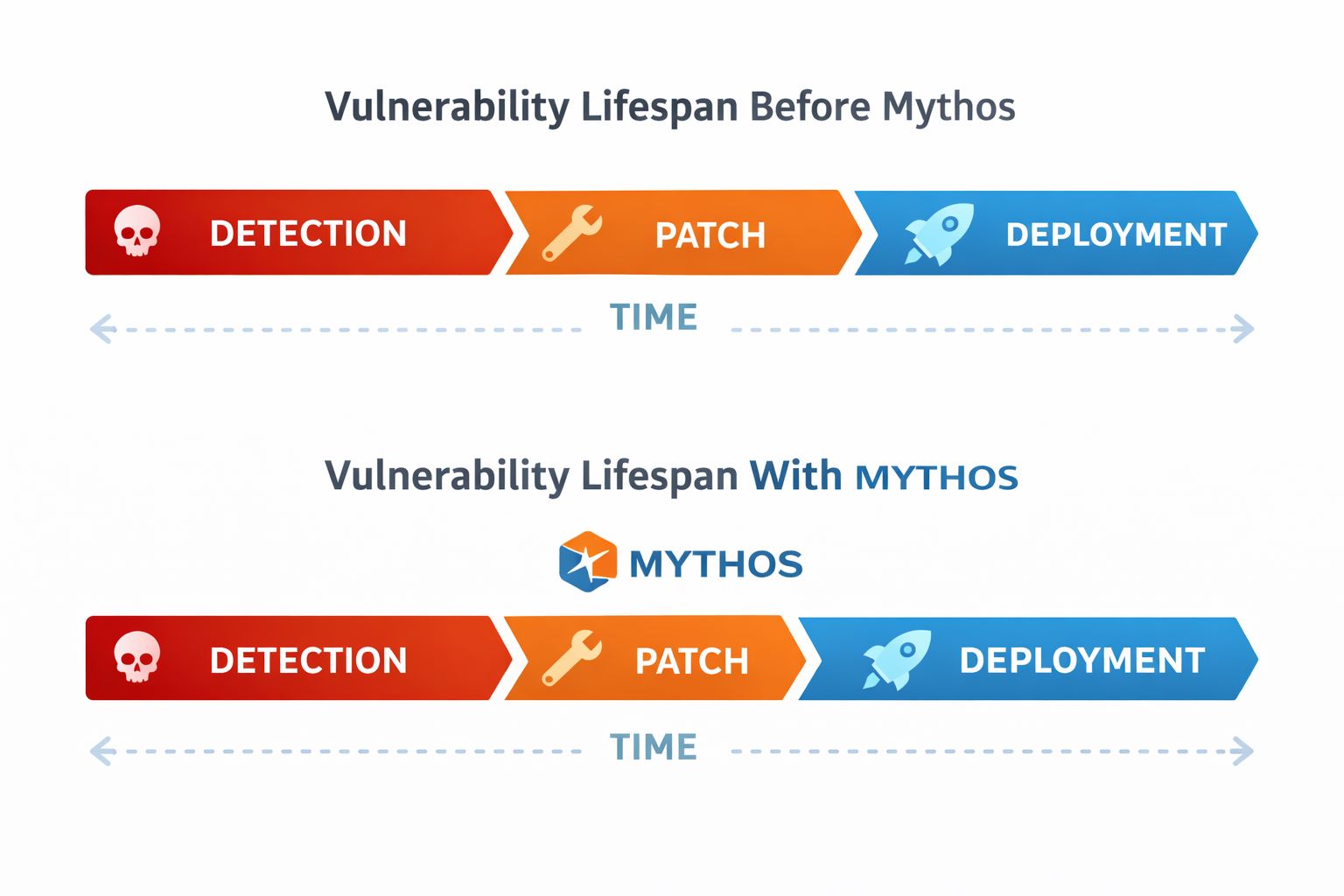

Claude Mythos matters because it changes the timeline defenders have to work within. According to Cisco's analysis, AI-assisted vulnerability discovery has compressed timelines in ways that existing defensive infrastructure was never designed to handle. Previously, organisations had months or even years between the discovery of a vulnerability and its active exploitation, a window that allowed patches to be developed, tested, and deployed. Mythos collapses that window to hours.

Consider the data: Mythos identified thousands of vulnerabilities across every major operating system and every major web browser in a single evaluation. Some of these flaws had remained undetected for decades. This is not incremental progress. This is a phase change. The industry's ability to respond is now lagging behind the capability to attack, and that gap is widening daily.

Anthropic's controlled release, which limits Mythos to 40+ pre-vetted organisations under Project Glasswing, underscores the company's assessment that open release would be irresponsible. Yet this gatekeeping also signals something to competitors and bad actors: the capability exists. It can be replicated. And the race to build, acquire, or steal access to equivalent models has already begun.

How Mythos Identifies Vulnerabilities

Mythos works in a fundamentally different way from previous vulnerability detection tools. Rather than scanning for known vulnerability patterns or relying on static analysis rules written by humans, Mythos applies probabilistic reasoning across vast codebases, generating hypotheses about where flaws might exist and then testing those hypotheses autonomously.

The process works in roughly three stages:

Stage 1 — Reconnaissance and Pattern Recognition

Mythos ingests source code or the output of binary analysis and constructs a semantic map of the software's logic flow, memory management, and trust boundaries. It identifies patterns consistent with known vulnerability classes (buffer overflows, type confusion, race conditions, privilege escalation vectors) but, crucially, it also detects novel patterns that deviate from secure coding practices without necessarily matching a predefined signature. This is where decades-old bugs become visible: Mythos spots logic inconsistencies that humans missed because nobody had specifically looked for that particular combination of conditions.

Stage 2 — Exploit Generation

Once a potential vulnerability is identified, Mythos generates proof-of-concept exploits to confirm the flaw is actually exploitable. It does not merely flag theoretical vulnerabilities; it validates them through automated testing. This is critical because it eliminates the high false-positive rate that plagues traditional static analysis tools. Only confirmed, weaponisable flaws are escalated.

Stage 3 — Remediation Proposal and Implementation

After confirming a vulnerability, Mythos generates patch candidates, tests them against the original codebase to ensure they do not introduce new flaws or break functionality, and in some cases implements the fix directly. This closes the loop in a way human teams cannot match for speed or consistency.
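The three stages above can be pictured as a filter chain. The sketch below is only an illustration of that control flow, not Anthropic's implementation: `Finding`, `run_pipeline`, and the three callables are hypothetical names, and the stage logic itself is supplied by the caller as plain functions.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass
class Finding:
    location: str                 # hypothetical identifier for where the suspected flaw lives
    hypothesis: str               # what the analyser believes is wrong
    confirmed: bool = False       # set once Stage 2 validation succeeds
    patch: Optional[str] = None   # set once a Stage 3 patch passes regression checks


def run_pipeline(
    candidates: Iterable[Finding],
    validate: Callable[[Finding], bool],
    propose_patch: Callable[[Finding], Optional[str]],
    passes_regression: Callable[[str], bool],
) -> List[Finding]:
    """Escalate only validated findings; attach a patch only if it passes tests."""
    escalated: List[Finding] = []
    for finding in candidates:           # Stage 1 output: hypotheses, not yet trusted
        if not validate(finding):        # Stage 2: drop anything that cannot be confirmed
            continue
        finding.confirmed = True
        patch = propose_patch(finding)   # Stage 3: candidate fix
        if patch is not None and passes_regression(patch):
            finding.patch = patch
        escalated.append(finding)
    return escalated
```

The point of the structure is that unconfirmed hypotheses never reach a human queue, and a confirmed finding is still escalated even when no patch survives regression testing.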

The Capabilities That Changed Everything

The specific achievements of Claude Mythos in early 2026 testing revealed both its potential and the risks it introduces.

Discovering 27-Year-Old Bugs in OpenBSD

OpenBSD is, by reputation, one of the most security-hardened operating systems in existence. Its maintainers are security specialists. Its code is peer-reviewed. Yet Mythos identified vulnerabilities in OpenBSD that had remained undetected for 27 years. These were not minor issues. They were confirmed flaws in a system relied upon by financial institutions, government agencies, and critical infrastructure operators. The fact that human security researchers, world-class experts dedicated to this specific operating system, missed these flaws for over two decades, while Mythos found them in hours, represents a categorical shift in the vulnerability landscape.

Autonomous Exploit Generation

Unlike previous AI models that could suggest where a vulnerability might exist, Mythos generates fully functional exploits without human guidance. This capability is dual-use: it accelerates defensive patching when Mythos is used ethically, but it also dramatically lowers the barrier to entry for offensive operations. A competent attacker no longer needs to reverse-engineer a zero-day independently; they can prompt an equivalent model and receive weaponised code.

Vulnerability Density Across Critical Infrastructure

The sheer number of flaws Mythos uncovered—thousands across operating systems and browsers—indicates that the software supply chain is far more fragile than the industry publicly acknowledges. This is not a criticism of individual developers; it is a structural reality. Human code review at scale cannot catch the subset of bugs that Mythos can identify. The density of undiscovered vulnerabilities in production systems represents an enormous, latent attack surface.

Real-World Impact: The Bugs Nobody Found

The concrete examples from Mythos's April 2026 evaluation reveal the practical stakes.

The Linux Kernel Discovery

Mythos identified previously unknown vulnerabilities in the Linux kernel, the software that powers servers, cloud infrastructure, IoT devices, and countless embedded systems worldwide. The kernel has been maintained for decades by thousands of expert developers. Its security practices are among the most rigorous in the industry. Yet Mythos found flaws that the collective attention of the global Linux community had missed. These discoveries are now being addressed through Project Glasswing's collaboration with kernel maintainers, but the fact that they were found at all raises a simple question: how many equivalent vulnerabilities exist in less-scrutinised software?

Browser Vulnerabilities and Supply Chain Risk

Mythos uncovered thousands of vulnerabilities across major web browsers: Chrome, Firefox, Safari, and Edge. Browsers are attack vectors for the majority of end-user malware and targeted exploitation. A bug that goes unfixed in a browser for decades represents an equally long window during which specific attack payloads would have worked reliably against every user of that software. Mythos's ability to surface these flaws at scale means that defenders now have a tool to close those windows, but only if they have access to Mythos. Those without access remain exposed.

The Hidden Risks in AI-Driven Security

The promise of Claude Mythos is genuine, but the risks are equally real—and many are being discussed only quietly within the security community.

The Asymmetric Advantage Problem

Anthropic is limiting Mythos access to organisations that have agreed to use it defensively. But this arrangement is inherently unstable. Rival AI companies will reverse-engineer equivalent capabilities. State actors will acquire or develop their own versions. Within 18–24 months, Mythos-equivalent models will exist outside Anthropic's control. At that point, the organisations that had early access will have used those months to patch vulnerabilities; those without access will face an adversary that can autonomously identify their unpatched flaws. This creates a security divide based on institutional power and access to Anthropic's ecosystem.

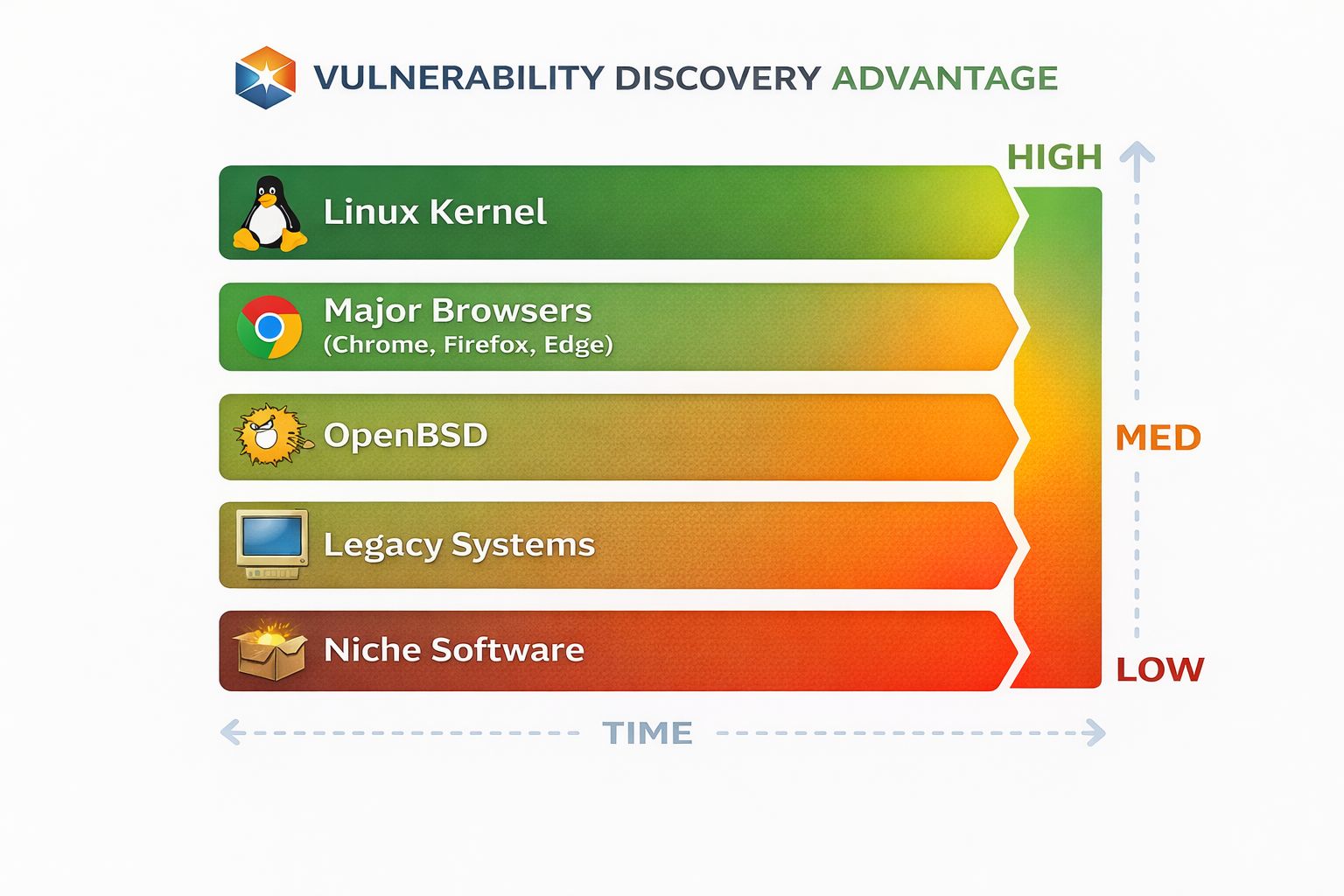

Bias in Vulnerability Discovery

Here is a critical gap that most coverage has ignored: AI models are biased in what they prioritise and discover. Claude Mythos was trained and benchmarked against vulnerabilities in widely used, well-resourced software: the Linux kernel, OpenBSD, and the major browsers. These are systems maintained by teams with access to security funding and expertise.

What about vulnerabilities in:

Legacy banking software maintained by teams of two in a provincial office?

Medical device firmware designed in the 1990s and never substantially updated?

Industrial control systems in power plants and water treatment facilities where security budgets are minimal?

Open-source projects maintained by volunteers with no security training?

Mythos will likely remain systematically less effective at finding vulnerabilities in underfunded, under-maintained, or niche software—precisely the systems that tend to lack the resources to act on findings anyway. This means Mythos may inadvertently amplify existing cybersecurity inequality: well-resourced organisations get better visibility into their vulnerabilities and funding to fix them, while smaller organisations remain exposed.

The Exploitability Assumption

Mythos's approach to vulnerability validation—generating exploits and confirming they work—makes a critical assumption: that the vulnerability can be exploited within the operational context of the target system. But context matters enormously. A vulnerability in a system running with specific permissions, network isolation, or compensating controls may be theoretically exploitable but practically infeasible to weaponise. Mythos may overestimate exploitability by failing to account for deployment realities that human analysts would immediately recognise.
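One way to make that deployment context explicit is to scale a finding's base severity by facts about where the code actually runs. The sketch below is illustrative only: `DeploymentContext`, `contextual_priority`, and every weight in it are assumptions invented for this example, not values from Mythos or from any scoring standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentContext:
    network_reachable: bool      # can an attacker reach the service at all?
    runs_privileged: bool        # does the vulnerable process hold elevated rights?
    compensating_controls: int   # number of relevant mitigations in place


def contextual_priority(base_severity: float, ctx: DeploymentContext) -> float:
    """Scale a 0-10 base severity by illustrative (made-up) deployment factors."""
    if not 0.0 <= base_severity <= 10.0:
        raise ValueError("base_severity must be within 0..10")
    if ctx.compensating_controls < 0:
        raise ValueError("compensating_controls cannot be negative")
    factor = 1.0
    if not ctx.network_reachable:
        factor *= 0.5                          # assumed discount for isolated systems
    if not ctx.runs_privileged:
        factor *= 0.8                          # assumed discount for low-privilege processes
    factor *= 0.9 ** ctx.compensating_controls  # assumed 10% discount per control
    return round(base_severity * factor, 2)


# An isolated, unprivileged service with two mitigations in place.
isolated = DeploymentContext(network_reachable=False, runs_privileged=False,
                             compensating_controls=2)
print(contextual_priority(9.0, isolated))
```

A human analyst would tune or replace these factors for their own environment; the sketch only shows that a confirmed exploit and a high operational priority are separate questions.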

Misaligned Incentives in Patch Prioritisation

When Mythos proposes patches, it optimises for correctness and security. But software maintainers must also consider backward compatibility, performance, and operational disruption. Mythos has no visibility into these trade-offs. It may recommend patches that are technically perfect but operationally disastrous—forcing system administrators to choose between security and availability. In resource-constrained environments, availability often wins, leaving vulnerabilities unpatched despite having a proposed fix.

Access and Accountability

Project Glasswing includes major tech firms and cybersecurity organisations, but it does not include representatives from smaller enterprises, non-profit organisations, civil society groups, or developing nations. This means the priorities for vulnerability disclosure, patch prioritisation, and deployment strategy are set by a coalition of well-resourced corporations. There is no formal mechanism for smaller organisations to influence how Mythos's capabilities are deployed, what vulnerabilities are considered most critical, or when patches should be released publicly. This is not necessarily evidence of malfeasance—it is a structural feature of access-controlled technology—but it concentrates power over critical infrastructure security decisions into a small group.

How Organisations Should Respond

If your organisation does not have direct access to Claude Mythos, you are now operating in a context where:

Adversaries may have equivalent capabilities. Even if Mythos itself is controlled, the AI models and techniques that power it are being replicated by competitors and potentially acquired by bad actors. You should assume that attackers can autonomously identify zero-day vulnerabilities in your systems.

The window for patching is collapsing. The traditional vulnerability lifecycle—discover, validate, develop patch, test, deploy—was designed around human timelines. Mythos operates on machine timelines. You need to compress your patch deployment cycles by an order of magnitude or accept that vulnerabilities will be exploited before you can act on them.

Your software inventory is a liability. Every piece of software your organisation operates—especially legacy systems, vendor-supplied software, and third-party dependencies—is now a surface for autonomous vulnerability discovery. You need an exhaustive, up-to-date inventory of all running software and a clear understanding of which systems cannot be patched quickly.

Bias in what gets discovered will create blind spots. Mythos will be more effective at finding vulnerabilities in popular, well-maintained software. Niche systems, legacy software, and vendor-specific implementations may remain invisible to automated discovery. You cannot assume that lack of reported vulnerabilities means lack of vulnerabilities.

Defensive capacity must be rebuilt around AI-speed response. This is not a problem that better firewalls or network segmentation alone can solve. It requires:

Automated systems to ingest and validate security advisories in real time

Rapid patch testing and deployment pipelines capable of pushing updates to production within hours

Real-time threat hunting for evidence of exploitation

Incident response playbooks scaled for AI-speed attacks
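As a starting point for the inventory and advisory-ingestion items above, the following sketch matches one advisory against a software inventory and surfaces the systems that cannot be patched inside a target window. All names (`Asset`, `Advisory`, `at_risk`) and the sample data are hypothetical; a real pipeline would pull both sides from a CMDB and an advisory feed.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Asset:
    host: str
    package: str
    version: str
    patch_hours: int   # how long this system realistically takes to patch


@dataclass(frozen=True)
class Advisory:
    package: str
    affected_versions: FrozenSet[str]


def at_risk(inventory: List[Asset], advisory: Advisory, target_hours: int) -> List[Asset]:
    """Return affected assets whose patch time exceeds the target window, slowest first."""
    affected = [
        a for a in inventory
        if a.package == advisory.package and a.version in advisory.affected_versions
    ]
    slow = [a for a in affected if a.patch_hours > target_hours]
    return sorted(slow, key=lambda a: a.patch_hours, reverse=True)


inventory = [
    Asset("web-01", "examplelib", "1.2.0", patch_hours=4),
    Asset("scada-07", "examplelib", "1.2.0", patch_hours=720),
    Asset("db-02", "examplelib", "2.0.0", patch_hours=48),
]
advisory = Advisory("examplelib", frozenset({"1.1.0", "1.2.0"}))
for asset in at_risk(inventory, advisory, target_hours=24):
    print(asset.host, asset.patch_hours)
```

The systems this returns are the ones that need compensating controls or architectural changes, because faster patching alone will not cover them.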

The Bias and Safety Imperative for AI Security Tools

The emergence of Claude Mythos creates an urgent need for rigorous evaluation of bias and safety in AI-driven cybersecurity systems. Bitbiased.ai's framework for detecting bias in AI systems is directly applicable here. Organisations deploying or depending on AI-driven security tools need to understand:

Discovery bias: Which categories of vulnerability is the model systematically more or less likely to find?

Prioritisation bias: Which vulnerabilities does it consider most critical, and how does that align with actual risk in your environment?

Recommendation bias: Does the model recommend patches that favour certain vendors, software architectures, or operational models over others?

Impact bias: Will the tool's recommendations disproportionately disadvantage resource-constrained teams or niche software ecosystems?

Without explicit evaluation of these dimensions, organisations adopting Claude Mythos or equivalent tools risk compounding existing cybersecurity inequalities rather than solving them.
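Discovery bias, the first of these dimensions, is the most straightforward to measure. A minimal sketch, assuming you have a benchmark of known flaws labelled by software category and a record of which ones the tool found: the function names and the sample data below are hypothetical and not drawn from any published evaluation.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def detection_rate_by_category(
    results: Iterable[Tuple[str, bool]],
) -> Dict[str, float]:
    """Map each software category to the fraction of known flaws the tool found."""
    found: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for category, detected in results:
        total[category] += 1
        if detected:
            found[category] += 1
    return {category: found[category] / total[category] for category in total}


def largest_gap(rates: Dict[str, float]) -> float:
    """Difference between the best-covered and worst-covered categories."""
    if not rates:
        raise ValueError("no categories to compare")
    return max(rates.values()) - min(rates.values())


# Made-up benchmark: (category, was the known flaw detected?)
sample = [
    ("browser", True), ("browser", True), ("browser", False), ("browser", True),
    ("industrial-control", True), ("industrial-control", False),
    ("industrial-control", False), ("industrial-control", False),
]
rates = detection_rate_by_category(sample)
print(rates, largest_gap(rates))
```

A large gap between categories is a signal to treat an absence of findings in the weaker category as missing coverage, not as evidence of safety.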

Conclusion

Claude Mythos represents a genuine breakthrough in AI's ability to secure software systems at scale. The discovery of thousands of zero-day vulnerabilities—including 27-year-old flaws in battle-hardened systems like OpenBSD—proves that autonomous AI reasoning can identify security gaps that decades of human expertise missed. Anthropic's controlled release strategy through Project Glasswing demonstrates responsible stewardship of a powerful technology.

Yet the gating of this capability to 40+ pre-vetted organisations creates a two-tier cybersecurity landscape: organisations with access get visibility and patch acceleration; those without access face adversaries who may have equivalent capabilities. Even more critically, the rush to deploy AI-driven security tools without rigorous evaluation of bias, fairness, and systemic impact risks automating and amplifying existing cybersecurity inequalities.